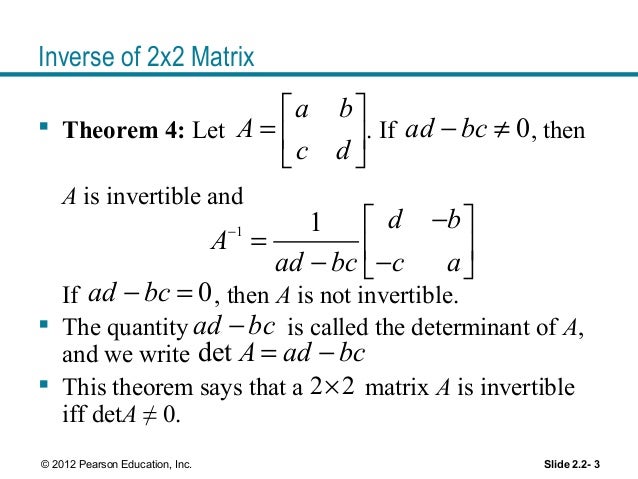

If A is a nonsingular $n \times n$ Matrix, the inverse $A^{-1}$ is computed such that $A A^{-1} = I$, where $I$ is the $n \times n$ identity Matrix. If A is recognized as a singular Matrix, an error message is returned. If A is non-square, the Moore-Penrose pseudo-inverse is returned.

If A is a non-square $m \times n$ Matrix, or if the option method = pseudo is specified, then the Moore-Penrose pseudo-inverse $X$ is computed such that the following identities hold: $A X A = A$, $X A X = X$, $(A X)^{*} = A X$, and $(X A)^{*} = X A$, where ${}^{*}$ denotes the Hermitian transpose.

If m is included in the calling sequence, then the specified method is used to compute the inverse (except for 1 x 1, 2 x 2 and 3 x 3 Matrices, where the calculation of the inverse is hard-coded for efficiency). The complex and rational methods augment the input Matrix with a copy of the identity Matrix and then convert the system to reduced row echelon form. The integer method calls `Adjoint/integer` and divides the result by the determinant. The univar method uses an evaluation method to reduce the Matrix to a Matrix of integers, then uses `Adjoint/integer`, and then uses genpoly to convert back to univariate polynomials. The polynom method uses an implementation of fraction-free Gaussian elimination. The LU and Cholesky methods use the corresponding LUDecomposition method on the input Matrix (if it is not already prefactored) and then use forward and back substitution with a copy of the identity Matrix as the right-hand side. The subs method indicates that the input is already triangular, so only the appropriate forward or back substitution is done.

If the first argument in the calling sequence is a list, then the elements of the list are taken as the Matrix factors of the Matrix A, due to some prefactorization. These factors are in the form of returned values from LUDecomposition.
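The "augment with the identity, then reduce to reduced row echelon form" idea described for the complex and rational methods can be illustrated with a small sketch. This is not Maple's implementation. It is a minimal pure-Python version using exact rational arithmetic, and the function name `inverse_by_rref` is a hypothetical name chosen for this example.

```python
from fractions import Fraction


def inverse_by_rref(rows):
    """Invert a square matrix by row-reducing the augmented matrix [A | I].

    Illustrative sketch only; not the algorithm used by any particular library.
    """
    n = len(rows)
    if any(len(r) != n for r in rows):
        raise ValueError("matrix must be square")
    # Build [A | I] with exact rational entries.
    aug = [
        [Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
        for i, row in enumerate(rows)
    ]
    for col in range(n):
        # Find a row at or below the diagonal with a nonzero entry in this column.
        pivot = next((r for r in range(col, n) if aug[r][col] != 0), None)
        if pivot is None:
            raise ValueError("matrix is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        # Scale the pivot row so that the pivot entry becomes 1.
        p = aug[col][col]
        aug[col] = [x / p for x in aug[col]]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col and aug[r][col] != 0:
                f = aug[r][col]
                aug[r] = [x - f * y for x, y in zip(aug[r], aug[col])]
    # The left block is now the identity, so the right block is the inverse.
    return [row[n:] for row in aug]
```

Calling `inverse_by_rref([[2, 1], [1, 1]])` returns the exact inverse as a list of rows of `Fraction` values, and a singular input such as `[[1, 2], [2, 4]]` raises `ValueError`, mirroring the error behavior described above for singular Matrices.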

Compute the inverse of a square Matrix or the Moore-Penrose pseudo-inverse of a Matrix

`MatrixInverse( A, m, mopts, c, out, options )`

- `m`: (optional) equation of the form method = name, where name is one of 'LU', 'Cholesky', 'subs', 'integer', 'univar', 'polynom', 'complex', 'rational', 'pseudo', or 'none'; method used to factorize the inverse of A
- `mopts`: (optional) equation of the form methodoptions=list, where the list contains options for specific methods
- `c`: (optional) equation of the form conjugate=true or false; specifies whether to use the Hermitian transpose in the case of prefactored input from a Cholesky decomposition
- `out`: (optional) equation of the form output=obj, where obj is 'inverse' or 'proviso' or a list containing one or more of these names; selects the result objects to compute
- `options`: (optional) constructor options for the result object

The MatrixInverse(A) function, where A is a nonsingular square Matrix, returns the Matrix inverse $A^{-1}$.

A vector space is defined (Definition VS) as a set of objects (“vectors”) endowed with a definition of vector addition ($+$) and a definition of scalar multiplication (written with juxtaposition). Many of our definitions about vector spaces involve linear combinations (Definition LC), such as the span of a set (Definition SS) and linear independence (Definition LI). Other definitions are built up from these ideas, such as bases (Definition B) and dimension (Definition D). The defining properties of a linear transformation require that a function “respect” the operations of the two vector spaces that are the domain and the codomain (Definition LT). Finally, an invertible linear transformation is one that can be “undone”: it has a companion that reverses its effect. In this subsection we are going to begin to roll all these ideas into one.

A vector space has “structure” derived from definitions of the two operations and the requirement that these operations interact in ways that satisfy the ten properties of Definition VS. When two different vector spaces have an invertible linear transformation defined between them, then we can translate questions about linear combinations (spans, linear independence, bases, dimension) from the first vector space to the second. The answers obtained in the second vector space can then be translated back, via the inverse linear transformation, and interpreted in the setting of the first vector space. We say that these invertible linear transformations “preserve structure.” And we say that the two vector spaces are “structurally the same.” The precise term is “isomorphic,” from Greek meaning “of the same form.” Let us begin to try to understand this important concept.
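To make "respecting the operations" and "undoing" concrete, here is a small sketch under stated assumptions: the matrices `T` and `S`, the vectors `u` and `v`, and the scalars `a` and `b` are hypothetical example data, and `apply` is a helper name introduced only for this illustration. It checks that a linear transformation given by a matrix carries a linear combination to the same linear combination of the images, and that the inverse transformation translates results back.

```python
def apply(matrix, vector):
    """Apply the linear transformation x -> matrix * x to a vector."""
    return [sum(m * x for m, x in zip(row, vector)) for row in matrix]


# Hypothetical example data: T is invertible and S is its inverse.
T = [[2, 1], [1, 1]]
S = [[1, -1], [-1, 2]]

u, v = [3, -1], [0, 4]
a, b = 5, -2

# Form the linear combination a*u + b*v in the first vector space.
combo = [a * ui + b * vi for ui, vi in zip(u, v)]

# T respects the operations: T(a*u + b*v) equals a*T(u) + b*T(v).
lhs = apply(T, combo)
rhs = [a * x + b * y for x, y in zip(apply(T, u), apply(T, v))]

# S reverses the effect of T, translating answers back to the first space.
round_trip = apply(S, apply(T, u))
```

In this sketch `lhs` and `rhs` agree, and `round_trip` recovers `u`, which is the sense in which a question about a linear combination can be carried to the second vector space and its answer carried back.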